Opening the Black Box: AI You Can Trust to Detect Financial Crime

Despite widespread recognition that AI can be an effective tool in detecting fraud and financial crime, many investigators at financial institutions (FIs) still do not fully trust its capabilities. As a result, a significant gap exists between the number of institutions that understand the potential of AI and those that use it. At the recent CeFPro Fraud & Financial Crime conference in New York, Hawk AI co-founder and CTO/CPO Wolfgang Berner addressed the obstacles to trusting AI, and the steps that FIs can take to overcome them.

Barriers to trusting AI to detect fraud and financial crime

A lack of transparency is the primary concern with using AI to detect fraud and financial crime. In many instances, AI is a black box. As such, FIs may not fully understand how the AI makes decisions. “There's a lack of transparency in output, but also in how you get the output,” said Berner. FIs will be held accountable for the decisions any AI model makes, so they need full explanations of how the model produces a given output.

A lack of governance can also cause issues. Without clear guidelines and regulations, FIs may be hesitant to take the risk of using AI. Model complexity is a further concern: the more complex an AI algorithm is, the harder it is to explain, and therefore to trust, its output. As a result, some financial institutions may prefer to stick with more traditional detection methods. However, governance principles and technological methods already exist to get FIs over these hurdles.

The Wolfsberg principles for using AI and Machine Learning in financial crime compliance

The Wolfsberg principles are a set of guidelines created by a group of 13 major global banks to address issues with using AI to detect money laundering and terrorist financing. These principles give guidance to overcome many of the barriers to trusting the outputs and decisions of AI. The guidelines focus on transparency in AI algorithms and the need for clear explanations of how the technology is making decisions. Additionally, the Wolfsberg principles emphasize the importance of governance, recommending that financial institutions establish clear policies and procedures for the use of AI in financial crime and fraud detection. By following these principles, financial institutions can increase their confidence in using AI technology.

The Wolfsberg Principles:

- Legitimate Purpose

- Proportionate Use

- Design and Technical Expertise

- Accountability and Oversight

- Openness and Transparency

The Wolfsberg Principles can be implemented in AI technology through two pillars: Explainability of Individual Decisions, and Model Governance & Model Validation.

Explainability

Building explainability into AI financial crime detection models is crucial for ensuring transparency and accountability in their decision-making processes. Explainability can be built into AI models by including natural language narratives, decision probabilities, verbalized decision criteria, and contextual valuation, as well as by allowing investigators to give feedback. These factors give investigators the contextual information they need to make case decisions.


“You don't need to be a data scientist to understand the narratives,” said Berner. “It needs to be human language.”
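To make the idea concrete, here is a minimal sketch of how per-factor contributions might be turned into a decision probability and a plain-language narrative. It is illustrative only and not Hawk AI's implementation: the `RiskFactor` type, the `explain` function, the 0.5 threshold, and the noisy-OR aggregation are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class RiskFactor:
    name: str         # hypothetical factor identifier
    description: str  # verbalized decision criterion shown to the investigator
    weight: float     # assumed contribution to the risk score, in [0, 1]


def explain(factors, threshold=0.5):
    """Return (score, narrative) for a single transaction.

    Aggregation uses a noisy-OR, an assumption made for this sketch so that
    the combined score stays within [0, 1].
    """
    remaining = 1.0
    for f in factors:
        remaining *= 1.0 - f.weight
    score = 1.0 - remaining

    verdict = "flagged for review" if score >= threshold else "not flagged"
    lines = [f"Transaction {verdict} (risk probability {score:.0%})."]
    # List the strongest contributors first so the narrative leads with them.
    for f in sorted(factors, key=lambda f: f.weight, reverse=True):
        lines.append(f"- {f.description} (contribution {f.weight:.0%})")
    return score, "\n".join(lines)
```

An investigator-facing system would likely also capture feedback on each narrative, which this sketch leaves out.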

A common criticism of explainable AI is that it is nice in theory, but that it doesn’t work for more complex models, such as deep learning models. Berner argued that complex models can be explainable, but achieving this goal requires clever engineering paired with subject matter expertise. “Even a very complex model can give explainable output,” said Berner. “We are doing this with billions of transactions in real time.”

Berner gave two examples of complex AI explainability in action: aggregating risk factors and analyzing risk factors as color channels in computer vision models. With data science and compliance expertise, complex models can deliver clear explanations while remaining effective at detecting anomalous transaction behavior.

Model Governance & Model Validation

The second pillar is Model Governance and Model Validation. For appropriate Model Governance, three elements are essential: Traceability and Versioning, Tooling to Support Automation, and Customer Acceptance.

Model Governance

Traceability is a key element of model governance. Traceability means recording information such as who trained the model, what data it was trained with, how it was tested, and what data it was tested with. This allows operators to understand the decision-making process of the AI model. Explainable models leave an audit trail for every decision, allowing operators to identify and fix errors, improve accuracy, and ensure that the model is fair and unbiased. Similarly, versioning allows for tracking changes, comparing different versions, and maintaining control over models. With versioning, developers can roll back to a previous version of their AI model or data if a new version contains errors or produces unintended results.
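As a rough illustration of traceability and versioning, the sketch below records who trained a model, with what data, and how it was tested, and keeps an audit log of every registry action including rollbacks. The `ModelVersion` and `ModelRegistry` names and their fields are hypothetical, and a production system would persist this in a database or a dedicated model registry rather than in memory.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class ModelVersion:
    version: str
    trained_by: str
    training_data: str  # e.g. a dataset identifier or hash (assumed format)
    test_data: str
    test_method: str
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


class ModelRegistry:
    def __init__(self):
        self._versions = []  # append-only history of registered versions
        self._active = None  # version string currently in use
        self.audit_log = []  # one entry per registry action

    def _log(self, action, version):
        self.audit_log.append(
            {"action": action, "version": version,
             "at": datetime.now(timezone.utc)}
        )

    def register(self, model_version):
        self._versions.append(model_version)
        self._log("register", model_version.version)

    def activate(self, version):
        if not any(v.version == version for v in self._versions):
            raise KeyError(f"unknown version: {version}")
        self._active = version
        self._log("activate", version)

    def rollback(self):
        """Re-activate the version registered just before the active one."""
        if self._active is None:
            raise ValueError("no active version")
        names = [v.version for v in self._versions]
        idx = names.index(self._active)
        if idx == 0:
            raise ValueError("no earlier version to roll back to")
        self._active = names[idx - 1]
        self._log("rollback", self._active)
        return self._active
```

Keeping the history append-only means a rollback is itself a recorded event, so the audit trail shows both the faulty release and the decision to revert it.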

Tooling also plays a critical role in implementing and enforcing model governance processes. With the increasing complexity and scale of machine learning models, it is impractical to manually manage and monitor all aspects of model governance. Utilizing tools that automate these processes can help ensure that the model governance is consistent, transparent, and effective. It also mitigates the risks associated with human error, inconsistencies, and bias in model development and deployment.

Customer acceptance is another essential piece of model governance. If the expertise and input of a financial institution’s compliance team isn’t included in the process, the models will not be effective at detecting financial crime. The marriage of technical know-how and financial crime expertise ensures the most effective AI deployment possible.

“The client needs to be in the driver seat for acceptance,” said Berner. “They need to look at the model and say, okay, this is the process of how we got the results. These are the results, and this is the comparison if we switch from one version to the other.”

Model Validation

To use AI to detect financial crime, Model Validation should be continuous. FIs and AI developers work together to set model benchmarks. Then, as the AI models produce results over time, they can compare the results against the benchmarks. To monitor the results, operators need to take specific samples from the data. This sampling should be automatic and ongoing as the model operates. It should also include both above-the-line and below-the-line sampling, meaning samples of cases that scored above the alert threshold and of cases that scored below it. This ensures accuracy in results on either side of predetermined thresholds, further validating the AI models.
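The sampling step could look something like the following sketch. It assumes each case is a `(case_id, score)` pair and that below-the-line samples are drawn from a band just under the threshold, which is one common choice rather than anything stated in the talk. The function name and parameters are hypothetical.

```python
import random


def sample_for_review(scored, threshold, n_above, n_below, band=0.1, seed=None):
    """Draw random review samples on both sides of the alert threshold.

    scored: iterable of (case_id, score) pairs.
    band:   how far below the threshold to look for below-the-line cases
            (an assumption for this sketch).
    """
    rng = random.Random(seed)
    scored = list(scored)
    above = [s for s in scored if s[1] >= threshold]
    below = [s for s in scored if threshold - band <= s[1] < threshold]
    return {
        # Alerted cases: checks that alerts are justified.
        "above_the_line": rng.sample(above, min(n_above, len(above))),
        # Near misses: checks that nothing suspicious is slipping through.
        "below_the_line": rng.sample(below, min(n_below, len(below))),
    }
```

Running this on a schedule and recording investigator outcomes for each sample gives a running estimate of false positives above the line and missed cases below it.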

“You need to look at KPIs that are relevant for the customer,” said Berner. “For example, what does the risk factor distribution look like? You establish the KPIs and then you monitor it over time.”
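One way to monitor a KPI like the risk factor distribution is to compare the current share of each risk factor against the agreed benchmark. The sketch below uses the Population Stability Index (PSI) as the drift measure. PSI is an illustrative choice made here, not a method named in the talk, and the function names are hypothetical.

```python
import math
from collections import Counter


def distribution(labels):
    """Share of each risk factor among the triggered cases."""
    counts = Counter(labels)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no labels to summarize")
    return {k: v / total for k, v in counts.items()}


def psi(benchmark, current, eps=1e-6):
    """Population Stability Index between two categorical distributions.

    eps floors empty categories so the logarithm is always defined.
    """
    total = 0.0
    for key in set(benchmark) | set(current):
        b = max(benchmark.get(key, 0.0), eps)
        c = max(current.get(key, 0.0), eps)
        total += (c - b) * math.log(c / b)
    return total
```

A value of zero means the distribution matches the benchmark; the level at which drift triggers a review would be agreed between the FI and the model developer.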

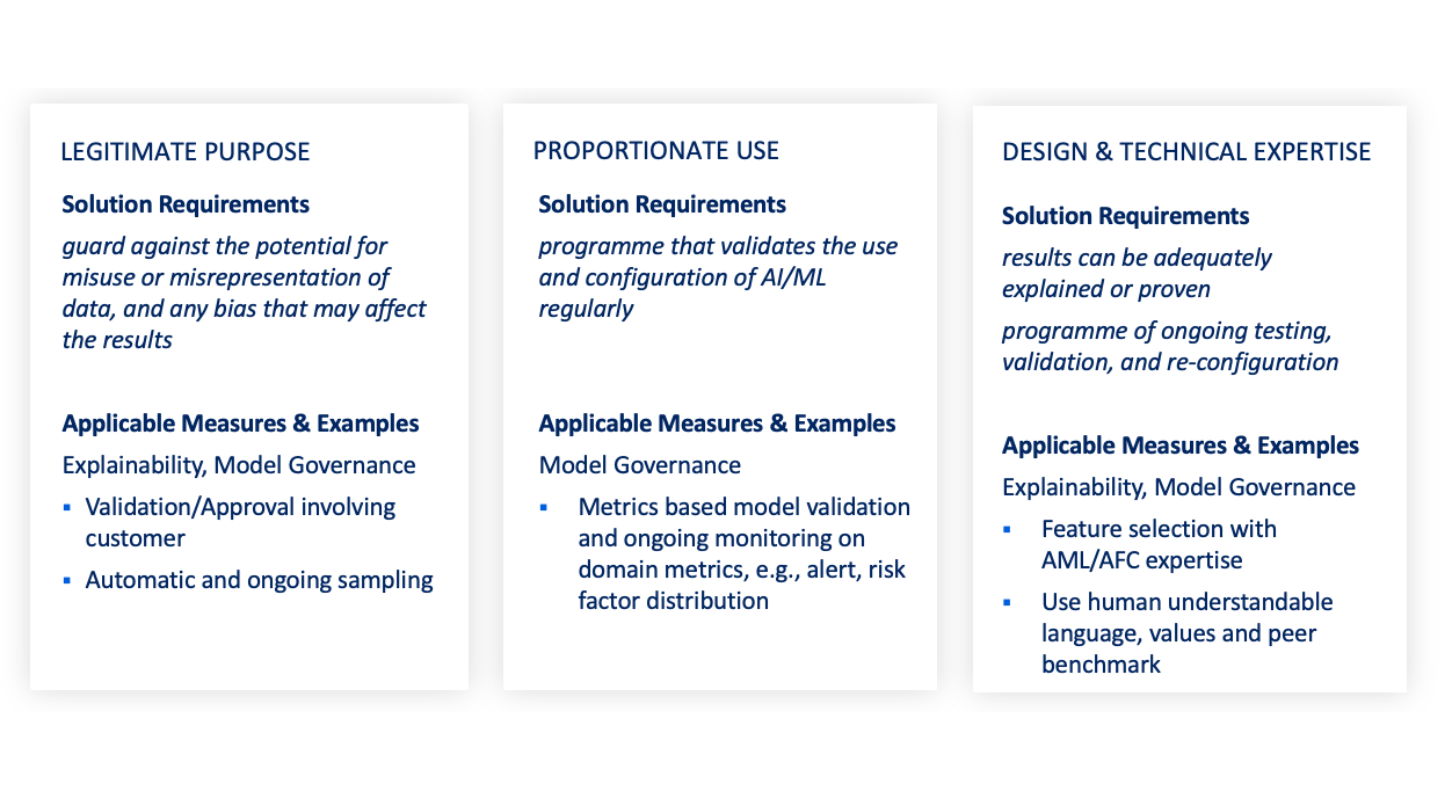

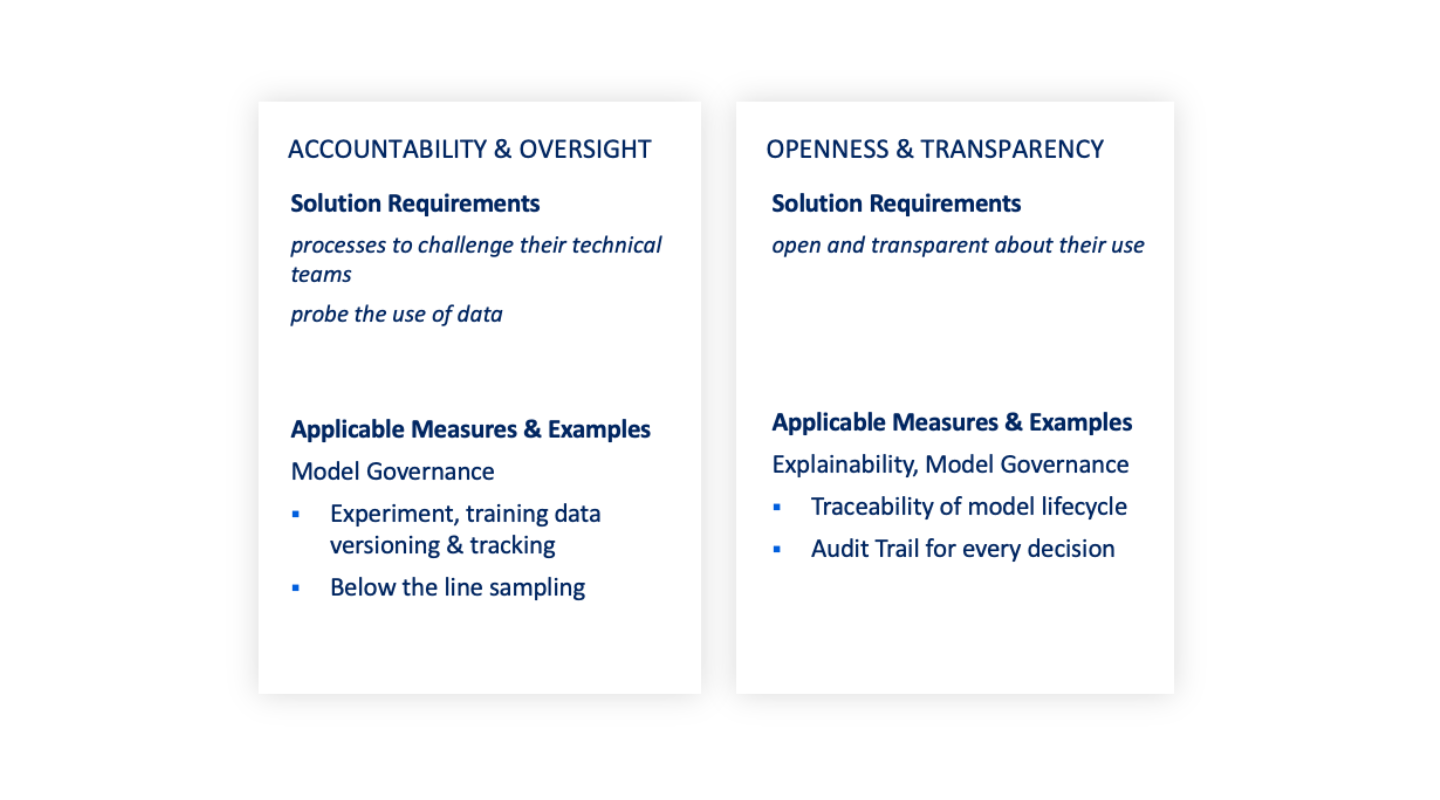

Measures Mapping the Wolfsberg Principles

In addition to outlining the Wolfsberg principles and the Whitebox AI pillars of Explainability and Model Governance & Model Validation, Berner also discussed several measures that AI developers can take to apply them. You can see these measures, as well as the relevant principles and Whitebox AI pillars, in the diagrams below:

The continued development of Whitebox AI is crucial for promoting trust and transparency in AI systems, enhancing regulatory compliance, and facilitating effective financial crime detection. As exemplified by the Wolfsberg Principles and the pillars of Explainability and Model Governance & Model Validation, it is possible to create AI models that are transparent, interpretable, and easily understood and audited by human operators. As Berner said, closing his presentation, “Whitebox AI isn’t just possible, it’s here.”