Case Study: False Positive Reduction & Auto-Closing

Most financial institutions know that throwing people at compliance problems is unsustainable, particularly as the business grows. Scaling headcount is an expensive, complex bottleneck that masks an underlying technology problem. Company A, a global payments processor that handles over one billion payments per year in dozens of markets, uses modern AML technology, powered by explainable AI, to solve that problem. Company A already used Hawk AI’s rules and anomaly detection AI to find suspicious activity and file SARs, and wanted to detect financial crime more effectively without placing an additional burden on its teams. With False Positive Reduction and Auto-Closing in place, Company A now sees a 97.3% reduction in false positives and a 51.4% true positive rate, along with reasonable thresholds, improved case relevance, manageable caseloads, explainable auto-closing, a risk-based approach, and quality control.

The Company

Industry: Payments

Annual Transaction Volume: ~2 billion

Countries Served: 37

Merchants Served: 400

Payment Methods: 700

Hawk AI Solution spotlights: Auto-Closing, False Positive Reduction, Anomaly Detection

The Problem: Regulatory Risk "Black Holes"

Even with anomaly detection in use, the company remained exposed to regulatory risk in several ways. Its small team of compliance analysts had limited time for case review, and with caseloads in the tens of thousands, resources were stretched thin. To keep workloads manageable, the team set high case thresholds that matched its capacity and risk experience. However, this approach did not help during audits, where the team would have a difficult time justifying thresholds that may miss cases that warrant further investigation. An auditor would not consider capacity a valid explanation for these thresholds. In response, the regulator would likely require the company to hire more compliance analysts, which would increase overhead significantly.

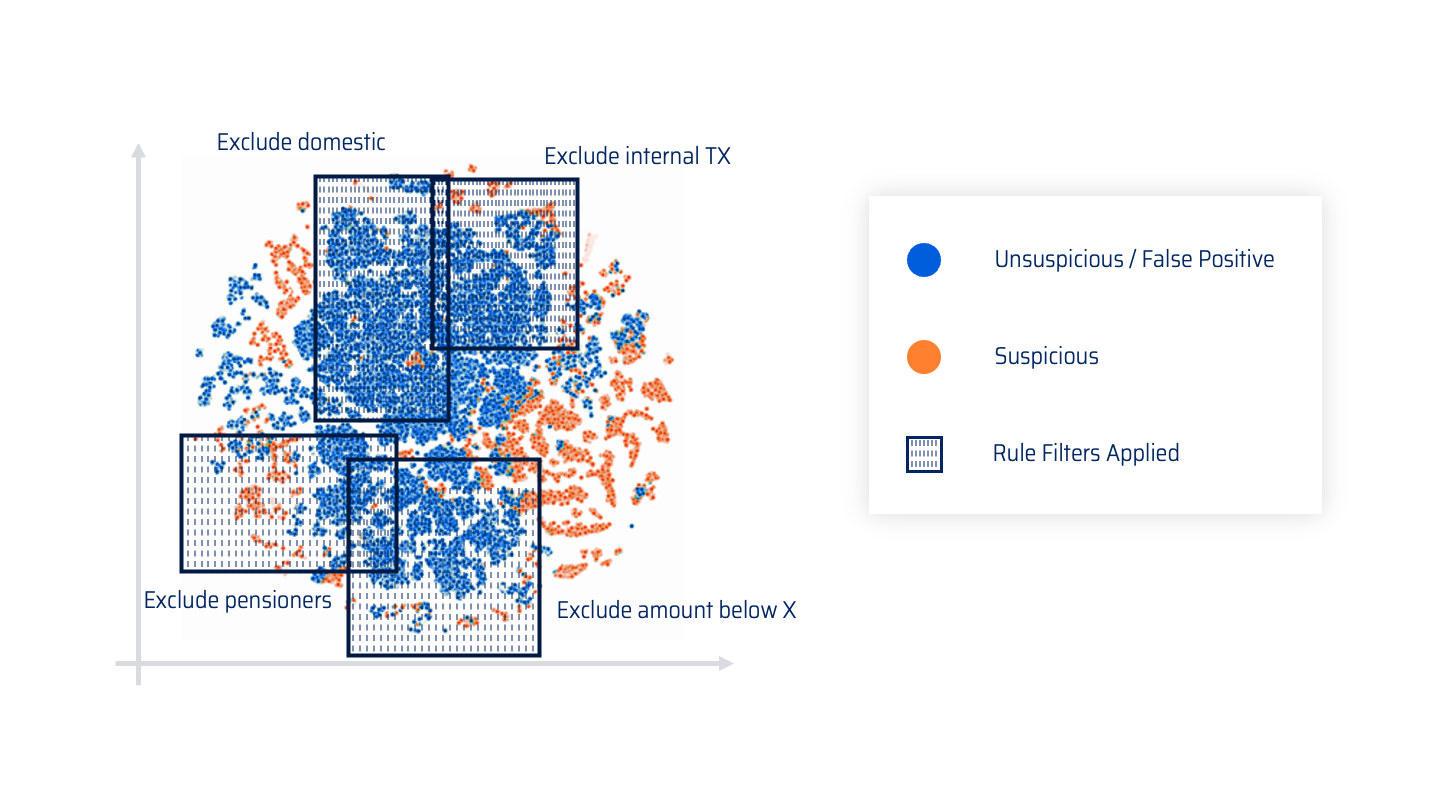

Capacity-based thresholds also limited the diversity of cases and merchants the team could review. The traditional method for dealing with capacity issues is to create rule exceptions, or filters, which let analysts look at smaller samples of the data. However, this approach creates massive risk “black holes” by excluding transactions that require scrutiny. It reduces false positives, but it also leaves out suspicious activity. Even with rule exceptions in place, the company’s investigative team was overwhelmed by false positive cases. Without a solution to these problems, the company ran a higher risk of missing true positives and failing to meet regulatory requirements.

The Solution: Context-Aware False Positive Filtering

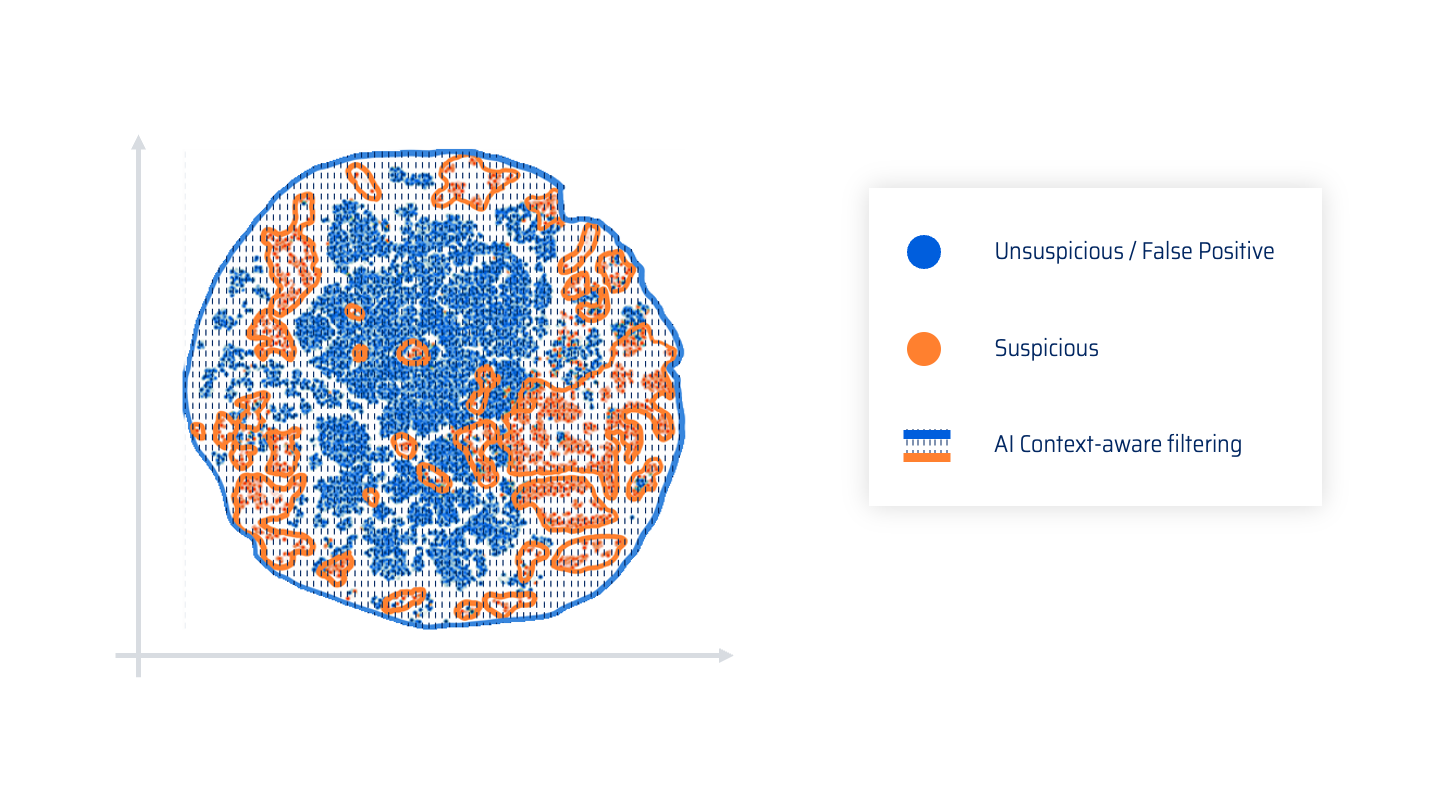

Company A employed Auto-Closing with False Positive Reduction to solve its capacity problem and mitigate regulatory risk. Now, every time there is a rule hit, the False Positive Reduction model evaluates it within the context of the case. The model determines whether the pattern of behavior is normal despite the risk. Once it makes that decision, it either automatically closes the case or escalates it to an analyst. Whichever decision the model makes, it records its reasoning in a natural-language explanation. Rather than intentionally limiting cases for review based on team capacity, Company A can let the model generate many cases; the False Positive Reduction model functions as a hyper-productive “virtual” team member that automates the first line of case review with contextual filtering.

“Using a context-aware AI for filtering is much more effective. It can create better decision boundaries and isolate suspicious activity from false positive cases,” said Wolfgang Berner, Co-founder and CTO/CPO of Hawk AI. “It can compare a transaction against historic customer behavior and the customer's peer group to understand what is normal. This gives much better and less risky results than the traditional approach.”
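The flow described above (evaluate a rule hit against the customer's own history and peer group, then auto-close or escalate with an explanation) can be illustrated with a minimal sketch. This is not Hawk AI's model: the z-score comparison, the `max_z` limit, and every name below (`Decision`, `z_score`, `triage_rule_hit`) are assumptions chosen only to make the idea concrete.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Decision:
    action: str       # "auto_close" or "escalate"
    explanation: str  # plain-language reasoning kept for audits


def z_score(value, baseline):
    """Number of standard deviations between value and the baseline mean."""
    center = mean(baseline)
    spread = pstdev(baseline)
    if spread == 0:
        # Flat baseline: any different value counts as maximally unusual.
        return 0.0 if value == center else float("inf")
    return (value - center) / spread


def triage_rule_hit(amount, customer_history, peer_amounts, max_z=3.0):
    """Auto-close only when the amount looks normal against both baselines.

    Hypothetical stand-in for a context-aware model; max_z is an assumed limit.
    """
    z_own = z_score(amount, customer_history)
    z_peer = z_score(amount, peer_amounts)
    context = (
        f"Amount {amount:.2f} is {z_own:.2f} standard deviations from the "
        f"customer's history and {z_peer:.2f} from the peer group "
        f"(limit {max_z:.1f})."
    )
    if abs(z_own) <= max_z and abs(z_peer) <= max_z:
        return Decision("auto_close", "Consistent with normal behavior. " + context)
    return Decision("escalate", "Outside normal behavior. " + context)
```

Every decision carries its explanation, whether the case is closed or escalated, which mirrors the audit trail the case study describes. A production model would weigh many more risk factors than a single amount.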

The Results

Reasonable Risk Thresholds

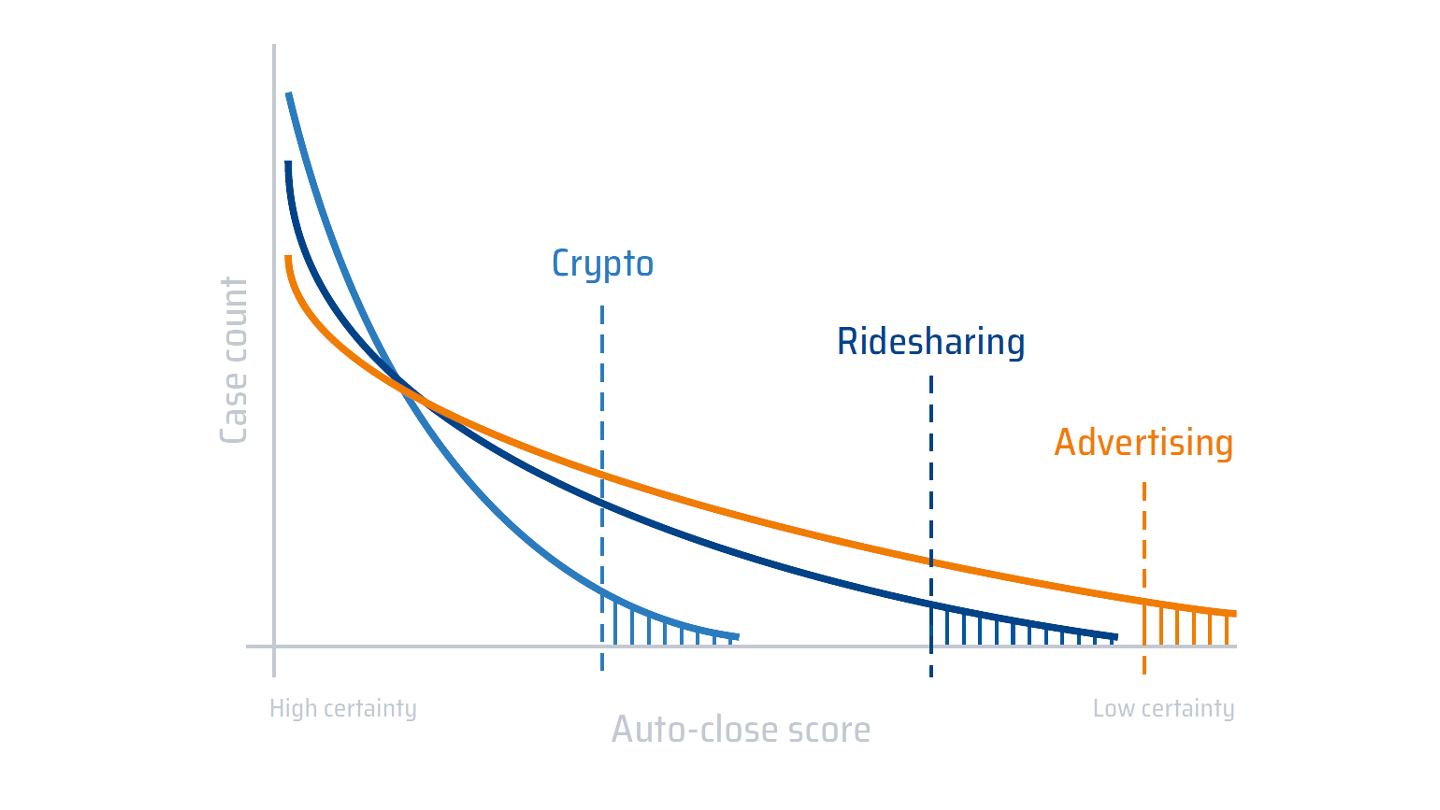

With Auto-Closing, the team sets case thresholds based on actual risk, not personnel capacity. The compliance team bases its new thresholds on its own standards of suspicious activity, justified with real examples. Previously, for instance, the team might have set a threshold for rideshare use at 10 ride transactions per day, based on its ability to review cases. The idea of anyone taking that many rides in a day is somewhat absurd; now that the False Positive Reduction model automatically closes some cases, the team can set that threshold at a much more reasonable two ride transactions per day. The model intelligently filters out cases that don’t meet all the criteria for suspicious activity and generates a natural-language explanation for each. The analysts spend more of their time reviewing quality cases. Reasonable thresholds also allow compliance analysts to view more case types, increasing the likelihood of detecting true suspicious activity.

Improved Case Relevance and Manageable Caseloads

Teams with limited human resources need to spend their time looking at relevant cases – cases where actual instances of financial crime are more likely. When the model automatically closes false positives, it drastically increases the relevance of the cases that they review. This minimizes the company’s regulatory risk exposure and leverages its existing compliance resources. With the enhanced case relevance, the company no longer needs to hire additional staff to review more cases. The model empowers the company to do more with less, complying with regulations and effectively detecting suspicious activity. When you look at the right cases, you don’t need to look at more cases.

Without the False Positive Reduction model, setting appropriate thresholds often results in heavy caseloads. The burden of unmanageable caseloads can overwhelm teams of any size. When the model automatically closes low-quality cases, the team can set more appropriate case thresholds. Now, the team can review the same number of cases, but they only look at the cases that are more likely to be suspicious. The company doesn’t have to drastically increase the size of the team, and the team doesn’t get overburdened with heavy caseloads.

Auto-Closing with Explanations

Audits can cause a major headache when you don’t have your bases fully covered. The lack of explanations for auditing purposes has been a common concern with black box AI solutions. With explainable AI that takes a white box approach, the model will generate detailed, natural-language explanations any time a case is auto-closed. The AI leaves a paper trail that the compliance team will have at hand for any audits or reviews. With the necessary contextual information readily available, the team will be able to justify the decisions they made based on the output of the model.

Risk-Based Approach

Auto-Closing complements the company’s risk-based approach. It empowers the team to focus its resources on high-risk areas and gives it more control over which cases, and which kinds of cases, it reviews. The team can set up and change thresholds based on the risk factors of a given industry, or any other segmentation they choose. They can increase or decrease a threshold for one segment without affecting the other rules they have already set. They can also adjust the model to review cases from underrepresented segments as needed.
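The per-segment thresholds described here can be pictured as a simple mapping from segment to limit, where changing one entry leaves the others alone. The sketch below is illustrative only: the function names, the fallback limit, and the "gambling" segment are hypothetical, while the rideshare limit of two transactions per day comes from the example earlier in this case study.

```python
DEFAULT_DAILY_LIMIT = 10  # hypothetical fallback for segments with no override


def threshold_for(segment, overrides, default=DEFAULT_DAILY_LIMIT):
    """Look up the daily transaction limit for a segment."""
    return overrides.get(segment, default)


def with_override(overrides, segment, limit):
    """Return a new mapping; the other segments' thresholds are left untouched."""
    updated = dict(overrides)
    updated[segment] = limit
    return updated


def raises_case(segment, daily_count, overrides):
    """A case is raised when the daily count exceeds the segment's limit."""
    return daily_count > threshold_for(segment, overrides)


# Usage: tighten one segment without affecting the rest.
overrides = {"rideshare": 2, "gambling": 5}  # "gambling" is an assumed segment
tightened = with_override(overrides, "gambling", 3)
```

Returning a new mapping rather than mutating the old one keeps earlier threshold sets available for comparison, which may help when the team has to justify a change.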

Quality Control

Hawk AI technology also allows the team to take a “trust but verify” approach to Auto-Closing. The team can randomly sample cases closed by the model whenever they need to. These closed cases include explanations for the decision to close, broken down by risk factor. With this information at hand, the team can evaluate whether the model is working as it should and make any necessary adjustments. The AI can also incorporate case relevance feedback from analysts into the model to improve its effectiveness over time.
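The “trust but verify” sampling described above can be sketched in a few lines. This is an assumption-laden illustration, not the product's API: the case records, the `analyst_agrees` field, and both function names are hypothetical.

```python
import random


def sample_closed_cases(closed_cases, sample_size, seed=None):
    """Draw a random sample of auto-closed cases for analyst review.

    A fixed seed makes the draw reproducible, e.g. for an audit record.
    """
    rng = random.Random(seed)
    k = min(sample_size, len(closed_cases))
    return rng.sample(closed_cases, k)


def overturn_rate(reviewed):
    """Share of sampled closures where the analyst disagreed with the model."""
    if not reviewed:
        return 0.0
    overturned = sum(1 for case in reviewed if not case["analyst_agrees"])
    return overturned / len(reviewed)
```

A rising overturn rate across successive samples would be one signal that the model or the thresholds need adjusting. The analyst verdicts could also serve as the feedback mentioned above.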